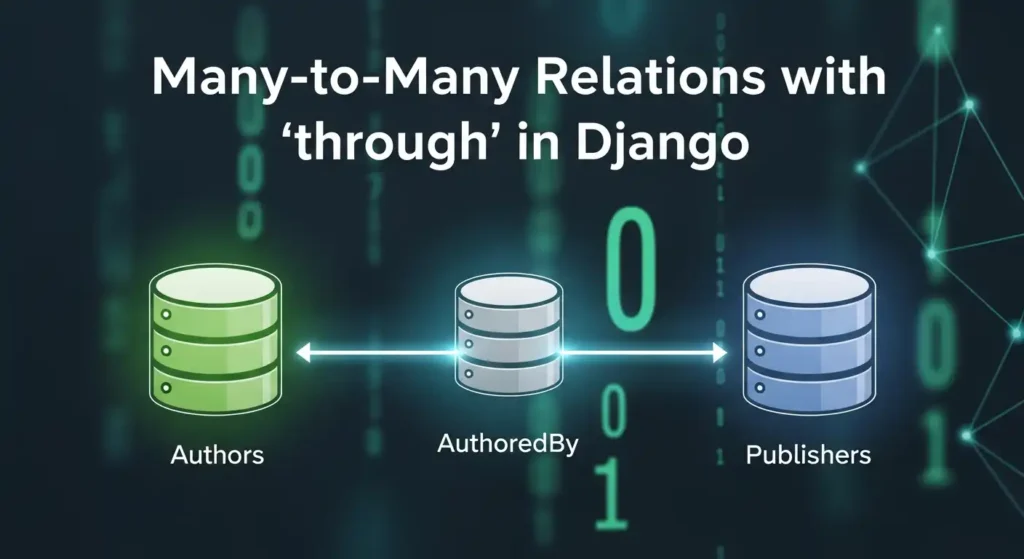

Python continues to dominate the programming landscape in 2025, and much of its success stems from its incredible ecosystem of libraries. Whether you’re building web applications, diving into machine learning, or creating stunning data visualizations, there’s likely a Python library that can accelerate your development process. In this comprehensive guide, we’ll explore the 20 most essential Python libraries that every developer should know about in 2025, organized by their primary use cases.

## General Purpose & Utilities

### 1. NumPy – The Foundation of Scientific Computing

NumPy remains the bedrock of Python’s scientific computing ecosystem. It provides support for large, multi-dimensional arrays and matrices, along with a collection of mathematical functions that operate on these arrays efficiently.

Use cases: Scientific computing, data analysis, image processing, financial modeling

### 2. Pandas – Data Manipulation Made Easy

Pandas is the go-to library for data analysis and manipulation. Its DataFrame and Series data structures make working with structured data intuitive and powerful.

Use cases: Data cleaning, exploratory data analysis, financial analysis, business intelligence

### 3. Rich – Beautiful Terminal Output

Rich has transformed how we think about terminal applications. It brings styled text, tables, progress bars, syntax highlighting, and Markdown rendering to the command line.

Use cases: CLI applications, debugging output, terminal dashboards, developer tools

### 4. Pydantic v2 – Type-Safe Data Validation

Pydantic v2 represents a major leap forward in Python data validation. Its core validation engine is written in Rust for performance, and it uses standard Python type hints to validate data at runtime.

Use cases: API development, configuration management, data parsing, form validation

### 5. Typer – Modern CLI Development

Typer makes creating command-line applications as easy as writing functions.
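
Typer’s central idea is that a function signature already describes a CLI. Parameters without defaults become required CLI arguments, parameters with defaults become options, and type hints drive value conversion. The sketch below makes that idea concrete without installing anything. It uses only the standard library (`argparse` and `inspect`). `cli_from_function` and `greet` are hypothetical names for illustration, and this is not how Typer is actually implemented.

```python
import argparse
import inspect
from typing import Any, Callable, Optional, Sequence


def cli_from_function(func: Callable[..., Any]) -> Callable[..., Any]:
    """Build a tiny argparse CLI from a function's signature (illustrative sketch)."""
    parser = argparse.ArgumentParser(description=func.__doc__)
    for name, param in inspect.signature(func).parameters.items():
        # Use the type hint as the converter; fall back to str if there is none.
        # Note: bool hints are not handled specially here (unlike Typer).
        converter = str if param.annotation is inspect.Parameter.empty else param.annotation
        if param.default is inspect.Parameter.empty:
            # No default value -> required positional argument.
            parser.add_argument(name, type=converter)
        else:
            # Default value -> optional --flag that falls back to that default.
            parser.add_argument(
                "--" + name.replace("_", "-"),
                dest=name,
                type=converter,
                default=param.default,
            )

    def run(argv: Optional[Sequence[str]] = None) -> Any:
        # argv=None makes argparse read sys.argv[1:], like a real CLI entry point.
        args = parser.parse_args(argv)
        return func(**vars(args))

    return run


def greet(name: str, count: int = 1) -> str:
    """Greet someone one or more times."""
    message = "\n".join(f"Hello {name}" for _ in range(count))
    print(message)
    return message


# Example: roughly what `python app.py Alice --count 2` would do from a shell.
cli_from_function(greet)(["Alice", "--count", "2"])
```

With Typer itself, you would create `app = typer.Typer()`, decorate `greet` with `@app.command()`, and call `app()`. Typer then also provides generated `--help` text, shell completion, and proper `bool` flag handling, none of which this sketch attempts.
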
From the creators of FastAPI, Typer brings the same elegant design philosophy to CLI development.

Use cases: Command-line tools, automation scripts, developer utilities, system administration

## Web Development

### 6. FastAPI – The Future of Web APIs

FastAPI has quickly become a preferred choice for building modern web APIs. It combines high performance with developer-friendly features and automatic API documentation.

Use cases: REST APIs, microservices, real-time applications, machine learning APIs

### 7. Django – The Web Framework for Perfectionists

Django remains a powerhouse for full-stack web development. Its “batteries included” philosophy and robust ecosystem make it ideal for complex applications.

Use cases: Content management systems, e-commerce platforms, social networks, enterprise applications

### 8. Flask – Lightweight and Flexible

Flask continues to be popular with developers who prefer a minimalist approach. Its simplicity and flexibility make it a good fit for smaller applications and microservices.

Use cases: Microservices, API prototypes, small to medium web applications, educational projects

### 9. SQLModel – The Modern ORM

SQLModel represents the evolution of database interaction in Python. Created by the FastAPI team, it combines SQLAlchemy and Pydantic so that a single class can serve as both a database table model and a validated data model.

Use cases: Modern web APIs, type-safe database operations, FastAPI applications

### 10. httpx – Async HTTP Client

httpx is a modern alternative to the requests library. It offers a largely requests-compatible API, both synchronous and async clients, and optional HTTP/2 support.

Use cases: Async web scraping, API integrations, microservice communication, concurrent HTTP requests

## Machine Learning & AI

### 11. PyTorch – Deep Learning

PyTorch has established itself as a leading deep learning framework, particularly in research communities. Its dynamic computation graphs and Pythonic design make it incredibly intuitive.
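
PyTorch’s “dynamic computation graph” means the graph of operations is recorded while your ordinary Python code runs (often called define-by-run). As a result, `if` statements and loops can produce a different graph on each call. Gradients are then computed by walking that recorded graph backwards. The toy, dependency-free sketch below illustrates the idea for scalars using reverse-mode automatic differentiation. `Value` and `f` are hypothetical names for illustration, and this is neither PyTorch’s API nor its implementation.

```python
class Value:
    """A scalar that records the operations applied to it as they run (define-by-run)."""

    def __init__(self, data: float, parents: tuple = ()) -> None:
        self.data = float(data)
        self.grad = 0.0
        self._parents = parents
        # Replaced by the operation that creates this node; pushes grad to parents.
        self._backward = lambda: None

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other))

        def backward():
            # d(a + b)/da = d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward = backward
        return out

    __radd__ = __add__

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other))

        def backward():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = backward
        return out

    __rmul__ = __mul__

    def backward(self) -> None:
        # Topologically order the graph that was recorded while the code executed.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        # Apply the chain rule from the output back towards the inputs.
        for node in reversed(order):
            node._backward()


def f(x: Value) -> Value:
    # Ordinary Python control flow decides which graph is built on each call.
    if x.data > 0:
        return x * x + 2 * x
    return x * -1


x = Value(3.0)
y = f(x)
y.backward()
print(y.data, x.grad)  # 15.0 8.0
```

In PyTorch itself, the comparable steps are `x = torch.tensor(3.0, requires_grad=True)`, `y = x * x + 2 * x`, and `y.backward()`. Afterwards, `x.grad` holds the derivative 2x + 2, which is 8 at x = 3.
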

Use cases: Deep learning research, computer vision, natural language processing, reinforcement learning

### 12. TensorFlow – Production-Ready ML

TensorFlow remains a cornerstone of machine learning, especially for production deployments. Google’s backing and comprehensive ecosystem make it a solid choice for enterprise ML.

Use cases: Production ML systems, mobile ML applications, large-scale deployments, computer vision

### 13. scikit-learn – Traditional ML

scikit-learn is the gold standard for traditional machine learning algorithms. Its consistent estimator API (`fit`, `predict`, `transform`) and comprehensive documentation make it accessible to beginners and powerful for experts.

Use cases: Traditional ML projects, data science competitions, academic research, business analytics

### 14. Transformers (Hugging Face) – NLP Revolution

Transformers has democratized access to state-of-the-art NLP models. The library provides easy access to pre-trained models such as BERT, GPT, and T5.

Use cases: Text classification, language generation, question answering, sentiment analysis

### 15. LangChain – LLM Application Framework

LangChain is a go-to framework for building applications powered by large language models. It provides abstractions for chaining LLM calls and building complex AI workflows.

Use cases: Chatbots, document analysis, AI agents, question-answering systems

## Data Visualization

### 16. Plotly – Interactive Visualization

Plotly leads the way in interactive data visualization. Its ability to create publication-quality plots that work seamlessly in web browsers makes it invaluable for modern data science.

Use cases: Dashboard creation, scientific publications, financial analysis, interactive reports

### 17. Matplotlib – The Visualization Foundation

Matplotlib remains the foundation of Python visualization.
While other libraries offer more modern interfaces, Matplotlib’s flexibility and comprehensive feature set keep it relevant.

Use cases: Scientific publications, custom visualizations, academic research, detailed plot customization

### 18. Seaborn – Statistical Graphics Made Beautiful

Seaborn builds on Matplotlib to provide a high-level interface for creating attractive statistical graphics. It’s particularly strong for exploratory data analysis.

Use cases: Exploratory data analysis, statistical reporting, correlation analysis, distribution visualization

### 19. Altair – Grammar of Graphics

Altair brings the grammar of graphics to Python, allowing for declarative statistical visualization. It’s particularly powerful for quick data exploration.

Use cases: Rapid prototyping, data exploration, statistical analysis, simple interactive plots

### 20. Streamlit – Data Apps in Minutes

Streamlit has revolutionized how data scientists share their work. It lets you create web applications in pure Python, with no web development experience required. I have also written a separate blog post on building a dashboard with Streamlit.

Use cases: Data science prototypes, ML model demos, internal tools, executive dashboards

## Choosing the Right Libraries for Your Project

When selecting libraries for your Python projects in 2025, start by considering which area your project falls into: web development, data science, AI applications, or CLI tools.

## The Future of Python Libraries