What Is CUDA?

If you’ve ever wondered how your computer manages demanding tasks like video editing, 3D rendering, or even AI applications so smoothly, there’s a good…

Read article

Archive


Look, I’ll tell you the truth. When I first learned about “composite APIs,” I assumed it was just another catchphrase used by consultants to…

Read article

Recently, I had an interview, and I'm sharing the questions I was asked during the session, along with their answers. 1. Fibonacci Series in…

Read article

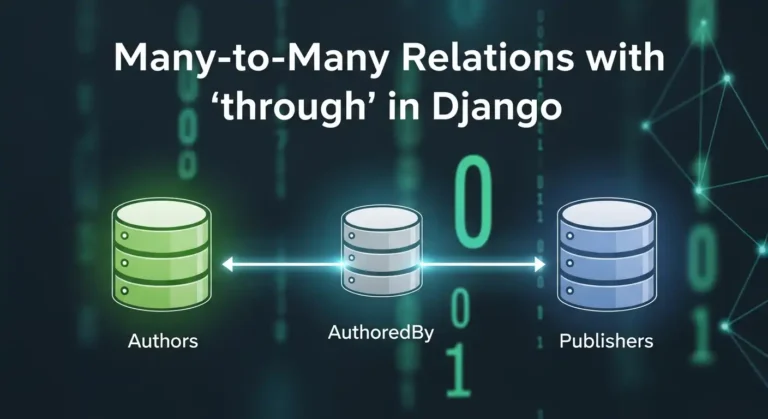

Hey there! If you’ve been working with Django for a while, you’ve probably used many-to-many relationships. They’re great, right? But have you ever felt…

Read article

Hi guys, I recently interviewed at a startup. I can't reveal its name, but I'm posting the questions they asked…

Read article

Google CEO Sundar Pichai recently appeared at the White House as part of the AI Education Taskforce. He discussed Google’s commitment to helping students…

Read article

Google has announced a major strategic partnership with Reliance Intelligence to expand access to AI across India. As a result, Jio Unlimited 5G users…

Read article

First West Credit Union, one of Canada’s largest, with over 280,000 members, has rolled out Microsoft 365 Copilot to all 1,300 employees, becoming the…

Read article

Hi guys! Today I’ll tell you about the top 5 AI web browsers available in the market and which one is the best. The way…

Read article

When I first started working with FastAPI, I was blown away by how quickly I could get a simple API up and running. But…

Read article