Ordinary Least Squares

You have most likely seen a trendline on a scatter plot: that straight line through a cloud of dots. It was unable to…

Read article

Archive

Imagine you’re a restaurant owner. You notice that on warmer days, more people buy ice cream. If you could quantify that relationship, you could…

Read article

If you’ve ever wished you had a brilliant coding teammate available who knows your entire codebase inside and out, Claude Code might be exactly…

Read article

Hallucination (General): Experiencing things that aren’t really there: seeing, hearing, or feeling something that doesn’t exist in reality. Your brain creates sensory experiences without…

Read article

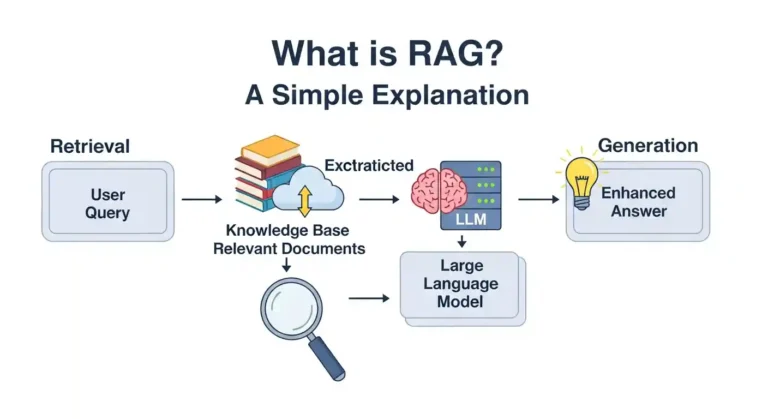

So you’ve probably heard the term “RAG” thrown around in AI chats and are wondering what it means. Don’t worry, it’s not as complicated as it seems, and I’ll explain it in plain English. The Basics: RAG stands for Retrieval-Augmented Generation. I know that sounds super technical. But here’s the thing: it’s actually a pretty clever…

Read article

I recently built a Netflix clone without writing most of the code myself. Before you close this tab thinking I’m advocating for replacing human…

Read article

Are you often confused about the difference between deep learning and machine learning? You’re not alone! These terms are frequently used interchangeably, but they…

Read article

The world of software development is changing faster than ever, and AI coding tools are leading the charge in 2025. These powerful assistants can…

Read article