Building Real-World APIs with FastAPI

When I first started working with FastAPI, I was blown away by how quickly I could get a simple API up and running. But…

Read article

Insights

So OpenAI just launched a browser. Not a Chrome extension, not a chatbot widget, but an actual, full-fledged web browser called ChatGPT Atlas. And honestly?…

Read article

Python 3.14 came out on October 7, 2025, and it has a lot of useful and powerful features that make coding easier, faster, and…

Read article

Hey there! So you want to work with files and folders in Python? Maybe automate some boring stuff? Well, the OS module is going…

Read article

Think of a dictionary as a real-life address book. You don’t flip through every page to find someone; you look up their name (key) to instantly…

Read article

FastAPI runs on Uvicorn, an ASGI server built for asynchronous, concurrent Python code. Django is older and has more features,…

Read article

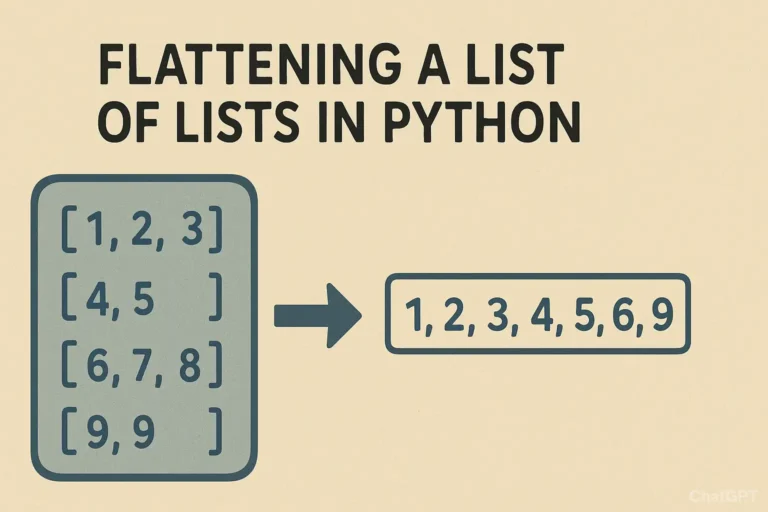

You open one list, only to find another list inside, and then another. In programming, we call this a “list of lists.” While this…

Read article

Hello, Python enthusiasts! Today we will take a deep dive into one of the most common operations in Python programming: merging dictionaries. Whether you’re working with…

Read article

Today, we’re going to break down yield into simple, digestible pieces. By the end of this article, you’ll not only understand what it does but also…

Read article

Command-line tools are essential for developers—they’re fast, lightweight, and automate repetitive tasks. In this tutorial, we’ll build a File Organizer CLI tool in Python…

Read article